A Seamless Maintenance Solution: AWS Elastic File Store for Oracle Database Logs

One of our clients is a global financial software specialist that streamlines and automates financial accounting processes. We host and manage their Software-as-a-Service (SaaS) based applications and leverage our AWS Engineers and Solution Architects to help build and manage the infrastructure as customer instances are on-boarded to AWS to support their ongoing operations.

One of the challenges we had with the Application Database was to estimate the size required for the file system to store archived redo logs. The allocated space was filling up frequently due to huge archived redo logs generation. Customer financial systems load the data and the calculation engine running on an Oracle database calculated various computations and populated the application transaction tables. This resulted in a high volume of transactions on the database, which created more change tracking logs, which caused the storage to frequently fill up and the database to go into a hung state. This caused all the scheduled jobs to fail. The amount of logs generated in a day was huge due to the change tracking feature on the database used to support point-in-time recoveries. So, turning off the archive logs was not a feasible option. We then setup various methods to clean up but our efforts were not that helpful. We also requested users to limit the data they loaded, or use a time window in a day to load, to help draw some predictability and monitor or estimate the capacity. No users were interested in trying that approach because the nature of the application and its reporting needs to be flexible for the end users to load data at any time and report against it.

Hence, for a seamless maintenance solution, we migrated the EBS file system to test using EFS (Elastic File System) volume to host the archived redo log files. Amazon EFS file systems can automatically scale from gigabytes to petabytes of data without needing to provision storage.

One EFS volume was shared across all Production Databases, which allowed more processes to write, thereby improving the I/O throughput. First, we tested on Non-Production databases, and later implemented it on the Production databases when the performance was acceptable.

EFS helped to ease maintenance and avoid the database to hang, which in turn improved the overall availability and gained operational efficiency.

With a retention of 3 days logs on EFS volume, for production databases, we could significantly reduce the Recovery Time Objective (RTO) for point-in-time recoveries. Also, we have enabled script based file sync between EFS drives in another region, and limited the snapshots transfer between the AWS regions. This has saved significant costs on data transfer.

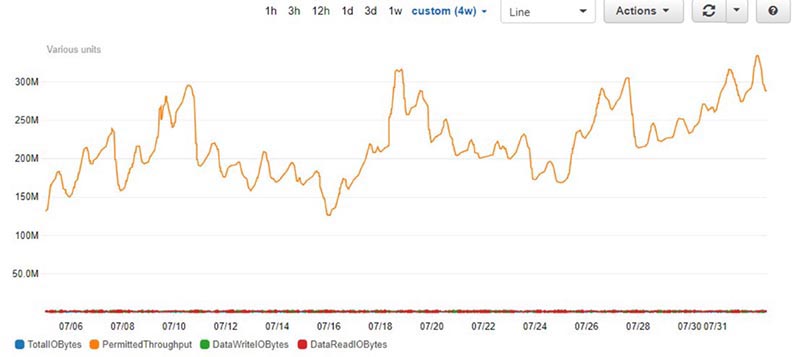

Below metrics shows the performance stats of the live EFS storage:

More description on the metrics are found on below link:

https://docs.aws.amazon.com/efs/latest/ug/monitoring-cloudwatch.html